Build the prototype to aid in new product discovery

Recognizing that we were finally in a position to start gathering real requirements from a prime new customer, I decided to move forward with building a prototype for a new product offering.

The Product Decision: Complete a working prototype that we could use to drive productive conversations with customers around requirements.

Flickr image source: http://tinyurl.com/pfyguao

The Product team had known for some time that we had a legitimate gap in our product portfolio around an MS Office 365 Plug-In, but we were not overly anxious, as it was clear that the gap was not preventing the company from winning new deals. Even though our main competitor would regularly raise the issue with prospects during the sales cycle, drawing attention to our "deficiency", it had never cost us a sale.

The MS Office 365 Plug-In saga continues to unfold...

You can review the entire story through my past Product Decisions:

HEAD OFF A NEW PRODUCT IDEA BEFORE IT GAINS ANY MORE STEAM

CLARIFY WIN-LOSS SPECULATION WITH POST-MORTEM INTERVIEWS

BUILD A QUICK PROOF OF CONCEPT

From the beginning, when this "urgent need" was first identified, I had been steadily pushing back on internal stakeholders (see sidebar). I knew we didn't have the available resources or bandwidth to tackle this and without a line of customers outside my "product door" clamouring to have the new feature, I was hesitant to even start conversations around the Plug-In.

What drove this decision

In the previous quarter, we had signed our biggest customer in the company's history and, in our lengthy discussions with them around their needs, we had identified the (missing) Plug-In as a key, must-have feature.

This one customer, when fully deployed, could have more than 2,000 people using the new Plug-In. Based on those numbers, it seemed reasonable to me that we now had a decent base of users from which we could start to gather some useful insights.

The decision: Complete a working prototype that we could use to drive productive conversations with customers around requirements

I was eager to start talking with and observing real users in the field but I didn't want to do that using the low fidelity and crudely fashioned proof-of-concept we had completed a few months back. It was time to build and start testing with a real, functioning prototype.

Plan of attack

We were still very early in the product cycle for this Plug-In and there was no pressure to cut corners or skip steps in our product discovery process. I wanted to make sure we used a disciplined approach.

In this first phase, we would create a prototype that would give our Tech team more confidence in what it would ultimately take to develop and support such an app AND that would give the Product Team enough material to have meaningful discussions with the first round of users.

Vet the feasibility of our technical approach with Engineering

Flickr image source: http://tinyurl.com/p65wgqb

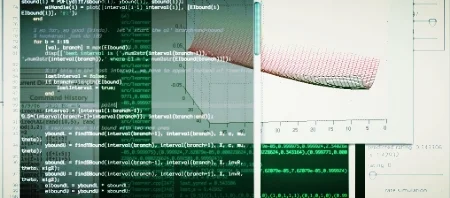

I had some solid ideas for how to create an initial version of the Plug-In, though it was fair to say that we would be forging into unfamiliar territory. The Tech team had never developed this kind of app, but we knew we would be getting support from our friends at Microsoft, one of our key technology partners.

The first step in building the prototype was making sure our team could nail down some of the technology challenges that we would face. For example, high on our list were questions about user authentication, API access, and deployment of the app to customers through Microsoft's "online store".

So, over the course of a few weeks, the team completed a functioning prototype that addressed these areas of concern. Now, more confident that we had cleared the most immediate technical hurdles, I proceeded with getting some internal validation.

Share the prototype with stakeholders inside the company

I very much wanted to show the prototype to our Sales Engineers. They had always been good product collaborators and certainly understood the broad use case for the Plug-In. I set up a meeting with them, the Product team, and the Engineers to gather and record some early usability feedback.

On the way to that meeting, I made a small detour to give a brief demonstration to our CEO as a courtesy “first look”. He had taken a keen interest in this initiative and had been stalking me for many weeks. And even though we had only addressed the simplest use case in this iteration, he was very impressed with our rapid progress. My only regret was letting an Engineer run the demo - who uses superheroes for their sample data? Ha!

We then sat with the Sales Engineers and reviewed our current hypotheses with them. They see much more front-line action with prospects in the sales cycle and helped validate our approach, often tying back to their deals from the past weeks and months.

I then had each of them install the Plug-In on their own machines to test it out. The response was overwhelmingly positive and we could see them already thinking about how they might adjust their standard demo scenarios to incorporate this new component.

One of them suggested they start using the Plug-In immediately to help them with one of their own team activities and I offered to check back in with them in a few weeks to see how it worked for that additional use case.

Show it to the technology vendor on whose platform our prototype was built

We had invited representatives from our partner Microsoft into our office for a high-level meeting to talk about Office 365 integration among other things. The timing of their visit provided a perfect opportunity for a prototype demo and to show what we had achieved in the past few weeks, with some help from their own technical folks.

After setting up the customer scenario with the group, we walked them through the working prototype and talked through some of the challenges we had worked through to get to this point. The Microsoft team provided additional validation around our approach for the Plug-In and gave us some pointers for how we could move forward. Our remaining questions were captured and would be carried back to their teams to help get us the answers we would need before rolling this out, even to beta customers.

In the end, I was pleased to know that our partner was ready to help us with next steps. They also expressed interest in the upcoming deployment with our new customer as it would provide a great case study for both us and them.

The impact

With the Engineering team, I was able to work through a few of the big technical unknowns to build a working version of the Plug-In. By meeting with and showing off the Plug-In to a few internal resources, we were able to get some early, but solid validation. And in reviewing the finished work with the vendor on whose platform we had built the Plug-In, we confirmed that we were on the right track in how we were going to deploy and support the new product.

But none of this would be worth anything if we didn’t immediately start validating the Plug-In with actual users. That work would likely start the very next week and is lining up to be the next chapter in this series.

Look for more reports from theProductPath around product validation, feasibility, and product investments here on PM Decisions.

More articles from our blog PM Decisions

Celebrate product wins big and small

It's easy and obvious for everyone to come together and share in the company's larger victories like closing a big deal, but I decided to devote some time to acknowledge, if not revel in some of our minor triumphs too.

The Product Decision: Recognize and applaud the lesser product successes too.

Flickr image source: http://tinyurl.com/ppparbe

Last month, we executed the largest sale ever in the company's history. And while there was no small amount of boisterous high fiving going on over in Sales, it was a great opportunity to bring all the departments together and spend a few moments cheering as a single group.

A big product win too

I was celebrating of course but for a slightly different reason. I was particularly encouraged by this deal because of how it reflected on our current product. It would seem that we had met the customer's needs exactly. After concluding a long sales cycle (not uncommon for an enterprise software company), we signed a contract with the customer without having to promise any changes in the core product or adjustments to the roadmap (quite uncommon for an enterprise software company).

Rarely have I been able to avoid the uncomfortable product roadmap discussions with well-meaning prospects on one side and hovering, commission-obsessed sales reps on the other, trying to move the deal along by finding the right words that would address the urgent needs for "missing" features.

So this was indeed a victory for Product and also for our dear friends in Product Marketing. Product Managers dream of building the exact right product for their target customers and when a big one lands, you have to feel good about it. But those victories can be short-lived as all eyes inevitably turn toward the next challenge.

What drove this decision

It is hard to say if or when we'll ever top this milestone, but it was another event that happened this past week that made me stop and think about the smaller accomplishments that also deserve recognition.

We had recently rolled out a new product which has been well-received by customers. One, in particular, an early and avid adopter, had recently become frustrated when a change to their own production environment temporarily "broke" our app. They called up and asked us to help remedy the problem because they couldn't go back to the way things were before using our app.

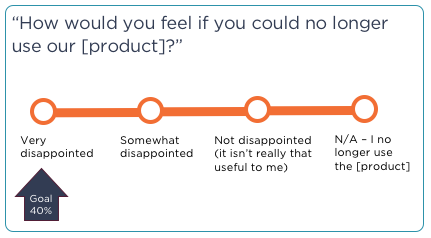

I immediately thought of that great 1-question survey popularized by Sean Ellis that helps Product people test for this exact outcome: if enough users respond by saying that they would be "very disappointed" if they were not able to continue using your product, then you should feel confident that you are on the right track.

We had experienced that exact outcome and even if it was only 1 customer, I still call that a victory! And I wanted to play up those wins with the troops too.
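For anyone who wants to run that survey at scale, here is a minimal sketch of how the answers might be scored. The answer labels, the function names, and the sample answers are made up for illustration, and the 40% threshold is the benchmark commonly attributed to Sean Ellis rather than a number from our own customers.

```python
from collections import Counter

# Hypothetical answer label; the exact survey wording is an assumption.
VERY_DISAPPOINTED = "very disappointed"


def very_disappointed_share(responses):
    """Return the fraction of responses that are 'very disappointed'."""
    if not responses:
        raise ValueError("need at least one response")
    counts = Counter(r.strip().lower() for r in responses)
    return counts[VERY_DISAPPOINTED] / len(responses)


def passes_benchmark(responses, threshold=0.40):
    """True when the 'very disappointed' share meets the assumed threshold."""
    return very_disappointed_share(responses) >= threshold


# Made-up sample answers, for illustration only.
sample = [
    "Very disappointed",
    "Somewhat disappointed",
    "Very disappointed",
    "Not disappointed",
    "Very disappointed",
]
print(f"{very_disappointed_share(sample):.0%}")
print(passes_benchmark(sample))
```

With a single vocal customer the share is not statistically meaningful, of course; the calculation only becomes useful once a reasonable number of users have answered.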

The decision: Recognize and applaud the lesser product successes too.

As a senior team member in the company, I have more visibility than most when it comes to departmental activity. I see the accomplishments being made every day and now I wanted to share those positive results with the appropriate parties who were not always directly connected to the action.

Plan of attack

My loose "plan" was simply to keep an eye out for opportunities where I could funnel reactions and reports back to the folks who would otherwise not hear of them. Along the way, I was a little curious to learn that there were not enough regular channels or venues for doing this and that sometimes, I had to get a little creative.

Share glowing feedback from a significant product demo

Early in the week, I delivered a custom product demo for an important, external party whom I would position somewhere between future business partner and potential investor. Several times during the demonstration, I received compliments, including an amazing, unsolicited comment from one of the women who was "weeping a little," wishing she had had the chance to use our product while working for her previous company.

At the very next Engineering standup meeting, I relayed the flattering remarks to the team. Some rolled their eyes at the glowing hyperbole, but I could tell they appreciated the message.

Appreciate engineering feats

Flickr image source: http://tinyurl.com/olfywby

Later in the week, we reached what I called a golden spike moment, referring to the 19th-century achievement of connecting the two sections of the First Transcontinental Railroad. In our case, two Engineering teams had connected a major new feature with its corresponding new configuration page.

We were now able to demonstrate how to build a new configuration and then immediately use that to launch the end-user tool. Achieving this (long overdue) task meant that we could now address a key pain point for our customers. I celebrated by delivering artisanal pastries to the teams at the sprint planning meeting.

Join in the "New features" huddle

The Product team was very excited to have rolled out two new, high-profile features in the last major release. However, we knew to temper our enthusiasm as it often takes several weeks or even months before customers latch on to and begin utilizing them in any significant way. Such is the pace of B2B software.

But one early indicator of success is when our own internal implementation team picks up the new stuff and starts incorporating it into their projects. During this same week, we were pleased to see our folks schedule a huddle to share some early tips and best practices with each other - a true indication that we had delivered something of value.

I invited the Product team to listen in on the discussion and together we basked in the indirect validation as we heard stories about how the new features would ultimately make their jobs easier and deliver better value for our customers.

I celebrated the win with the Product team over a nice lunch (which I ultimately turned into a working lunch to conduct a customer journey mapping exercise - ha!)

The impact

You don't always need to celebrate with free food, but I have found that people do like to feel appreciated. Our teams have certainly responded to the positive reinforcement and I will continue to keep an eye out for opportunities to share good tidings.

Look for more reports from theProductPath around product culture, product feedback, and validating products here on PM Decisions.

Conduct high-priority product research

To address one of the most critical problem areas for customers using our software platform, I decided to roll up my sleeves and conduct the fieldwork myself.

The Product Decision: Enlist the help of our User Research expert to coordinate formal interviews with a suitable group of administrators and end users from our active customer base.

Flickr image source: http://tinyurl.com/pmvvurb

For many months, we had been recording feedback from our customers, some direct, some indirect around one particular area of the product. Everyone agreed that we had delivered really powerful features - the common challenge was in the setup and configuration. We had made it too complex to use this valuable piece of the product!

I couldn’t agree more. I knew how hard it was, even for more technical users, to configure the tools. I have a deep programming background, but even I would struggle to ramp up on proprietary schemas with cryptic configuration syntax and poor documentation.

We had been attempting to compensate and even head off frustrated customers by offering to have our own Professional Services team help get customers up and running. But that only served to delay the inevitable, as the complaints would then change to “even if you build it for me the first time, I’m not confident I can take it over and maintain it!”

What drove this decision

I had been anticipating a suitable window of time where we could focus on revisiting and rethinking these problems. The teams had been making good progress on some of the prerequisite components that would be leveraged to rebuild this key feature of our platform. It was time to initiate a round of interviews with customers about how they used the current product, what (if anything) they liked about it, and what (again, if anything) they could point to that caused problems for them or their end users.

I wanted to take full advantage of this opportunity to get out of the building, to speak directly to users and reinforce this important research activity with the members of my Product team. Mostly though, I wanted to improve our product and make our customers happy.

The decision: Enlist the help of our User Research expert to coordinate formal interviews with a suitable group of administrators and end users from our active customer base.

I am fortunate to have a talented resource on our team who has raised the bar around user research for our entire company. She continues to guide us all on the finer points of interviewing users early on around product discovery and later on for product usefulness and usability. With her by my side, I felt very confident that we would get to the crux of the problems and ultimately, be able to prioritize the work to come.

While I was eager to get started and optimistic about what we would learn, I heeded my colleague’s advice and adhered to the plan we made to ensure a favorable outcome.

Plan of attack

I have used our product internally and I have also demoed it hundreds of times to customers so I felt confident that I knew what was wrong and what would need to be fixed. It was so tempting to just start writing requirements.

But I’ve been wrong before. Let me say that again, I’ve been wrong before. I have felt strongly about how our product should work and gone straight to the Engineers with requirements, only to find out, after they had spent valuable time implementing the stories, that there were no customers lined up to use the new enhancements.

Start with hypotheses and a good prototype

Because I was so familiar with this particular part of our platform, I was able to quickly compose a lengthy list of hypotheses of pain points where I would expect to hear complaints from both heavy and casual users:

- We believe Admins are struggling to initially configure a URL to set up a new instance of [tool] in environments like Salesforce.com.

- We believe end users are frustrated with the number of clicks required to complete the ... process, especially if only one document is necessary.

- We believe Admins are resisting adopting and expanding their use of [tool] because it lacks good error handling, is difficult to test, and has too few options for controlling its behavior for the end user.

- We believe end users struggle with scrolling and otherwise navigating through the different areas of the page, e.g. with long lists of templates or with very long data forms and may find it helpful to track incremental progress as they work through sections of a longer form.

- We believe end users are underwhelmed with the stark layout of the [tool] page, the lack of branding, and the lack of any help text.

- We believe end users struggle with using the [tool] with different browser window sizes, specifically when the buttons move around when the page is resized.

- ...

I then had the UX team mock up a clickable prototype that addressed some of the primary concerns and that would allow us to better engage with our interviewees.

Recruit and track participants

To build a list of participants, I first reviewed the customers who were already part of our formal “labs” program (always be recruiting!). Then, I put the word out to our Sales and Professional Services teams to identify other viable customers with whom we could talk. The resulting list was manageable enough for us to track using a shared spreadsheet that included contact information, interview dates, and miscellaneous notes.

Flickr image source: http://tinyurl.com/nomqgdl

| Contact Names | Organization | Preferred Phone/Email | Participation | Last Contact Date | Future Participation | Primary Internal Contact | Notes |
|---|---|---|---|---|---|---|---|
| Nellie Bluth | The Bluth Company | nbluth@thebluths.com | Asked to be included in Fall research initiatives | 7/12/15 | Also willing to help with... | Maggie Lyes, Acct Mgr | Has been helpful in the past but admits to not being technical |
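If the list ever outgrows a shared spreadsheet, the same columns translate easily into a small record type. The sketch below is only an illustration of that idea, not something we actually built: the `Participant` class, its field names, and the `to_csv` helper are all hypothetical, and the sample row simply reuses the entry shown above.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Participant:
    # Field names mirror the spreadsheet columns; the class itself is hypothetical.
    contact_name: str
    organization: str
    preferred_contact: str
    participation: str
    last_contact_date: str  # kept as text, exactly as entered in the sheet
    future_participation: str
    primary_internal_contact: str
    notes: str = ""


def to_csv(participants):
    """Serialize participants to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Participant)])
    writer.writeheader()
    for person in participants:
        writer.writerow(asdict(person))
    return buffer.getvalue()


# The sample row from the tracking sheet above.
row = Participant(
    contact_name="Nellie Bluth",
    organization="The Bluth Company",
    preferred_contact="nbluth@thebluths.com",
    participation="Asked to be included in Fall research initiatives",
    last_contact_date="7/12/15",
    future_participation="Also willing to help with...",
    primary_internal_contact="Maggie Lyes, Acct Mgr",
    notes="Has been helpful in the past but admits to not being technical",
)
print(to_csv([row]))
```

Keeping the date as plain text avoids guessing at a date format the spreadsheet never specified.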

Conduct and record the interviews

When we had accumulated a sufficient list of suitable users, I crafted and sent out an email invite along these lines:

“Hello Vincenzo, I would like to enlist your help for a product research initiative.

I head up the Product team and am leading an active project to learn more about how customers are using our tools. I am reaching out to you because your group is currently using [tool]. I believe you would be able to provide some great feedback on what is and isn’t working well.

If you are interested in participating, the next steps are easy:

1 - Reply to this email with some available interview times over the next 2-3 weeks.

For example, “Tuesday mornings are better”, “tomorrow”, “Wed/Thurs between 3-5pm Pacific”.

2 - Prepare to walk us through how you or your end users use [tool] today

This is a great opportunity to tell us about your successes with [tool] as well as things that would make it easier for you. The conversation should not take more than 45 minutes.”

Not surprisingly, our more fervent users responded immediately. With the others, it sometimes took a little more effort to secure the interviews. We mostly used virtual web meetings to conduct and record the interviews where the customer did most of the driving while we watched.

Our agenda for the interviews followed this path:

- Show us how you use the product today [50% of the interview time was spent here]

- As an [administrator, end user], what, if anything, would you say are your favorite things about [tool]? [10%]

- What, if anything, causes you or your users problems when using [tool]? [20%]

- Switching gears, we'd like to show you our ideas for a new design... [20%]
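To make those percentages concrete, here is a minimal sketch that converts the agenda weights into minutes. It assumes the 45-minute cap mentioned in the invite; the shortened topic labels and the `time_budget` function are shorthand invented for illustration.

```python
# Agenda weights taken from the list above; the labels are shorthand.
AGENDA = [
    ("Show us how you use the product today", 0.50),
    ("Favorite things about the tool", 0.10),
    ("Problems when using the tool", 0.20),
    ("Walk through the new design ideas", 0.20),
]


def time_budget(total_minutes, agenda=AGENDA):
    """Split an interview of total_minutes across the agenda weights."""
    total_weight = sum(weight for _, weight in agenda)
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError("agenda weights must sum to 100%")
    return [(topic, total_minutes * weight) for topic, weight in agenda]


for topic, minutes in time_budget(45):
    print(f"{minutes:4.1f} min  {topic}")
```

For a 45-minute session that works out to roughly 22 minutes of watching the customer drive, which is where most of the useful observations tend to come from.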

I had an intern transcribe notes from the recordings, putting all the material in a central repository so we could index it properly and share it with the rest of Product and Engineering.

The impact

The customer interviews went smoothly, giving me exactly what I had hoped to obtain. There was plenty of validation around our current shortcomings, some surprises about certain attributes that users found valuable, and an abundance of constructive griping. The early prototype seemed to be a hit with users and gave me additional confidence to pursue that path.

I expect to deliver a first round of product improvements in the very next release and will continue to engage these customers to validate our efforts. Look for more reports from theProductPath around customer research, product validation, and feature prioritization here on PM Decisions.

Respond to unhappy customers

To learn more about post-purchase sentiment, I decided to engage with our Customer Success team and become more aware of where our users were struggling.

The Product Decision: Carve out time to listen to and understand some of the problems customers were having with our product and to help identify ways to address each distinct concern.

Flickr image source: http://tinyurl.com/orb4qg5

I will admit that my Product team and I tend to spend more time interviewing and talking with amicable customers. That is not to say that we only approach our happy users; in fact, a fair number of the research-oriented conversations have been tempestuous. Indeed, through our collective research efforts over the past year, we have collected quite a bit of product feedback that would land well on the other side of "needs improvement".

But in this exercise, I wanted to turn my attention specifically to customers who were in peril. And I wanted to go straight to the front lines.

What drove this decision

In addition to what we were learning from the interviews we had been conducting with customers and prospects around new features and enhancements, I also wanted to know more about our product's pain points. And I thought that a great way to do that was to talk with customers who were currently lodging their complaints with our Support team.

The decision: Carve out time to listen to and understand some of the problems customers were having with our product and to help identify ways to address each distinct concern.

In the span of a single week, I was able to participate in three separate support cases. I got a chance to hear, in some cases first-hand, from customers who had legitimate complaints about:

- An unacceptable implementation scenario

- An (unintentional) product feature gap

- A grueling Administrator on-boarding experience

These scenarios afforded me a good cross-section of problems to explore. One was griping about our product's inability to satisfy their complex security problem. Another had caught a change we had made in a recent release that legitimately "broke" one of their use cases. And a third was trudging through our formal training course and was beginning to grasp all that he would ultimately have to learn to support his own company's solution.

Plan of attack

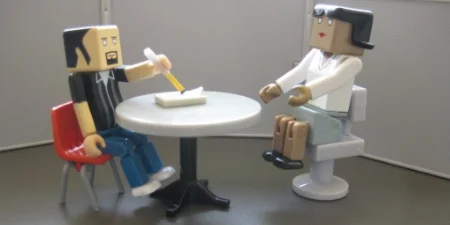

I was intrigued to have three customers with three very different concerns. My process for each was similar, however. I spoke with the Customer Success person who was interfacing directly with the users, tried my best to assess the particular situation, and made sure I understood each customer's own challenge.

In the end, I helped to resolve each situation but with three very different outcomes.

Scenario 1 - "Fire" a customer for which there was truly no fit

My first conversation revolved around a newer customer who had been planning to go live with their implementation in the next few weeks. In working through their initial project, however, they had determined that they needed a much more sophisticated security scheme and were now struggling with that part of our platform.

Somewhere along the way, we had clearly misled this particular customer. We had since exhausted all the obvious and even more creative options and I confirmed that we would not be otherwise enhancing the product to support their unique use case.

It did not take me long to recognize we were at an impasse. Looking to quickly resolve the situation, we made the tough decision to rip up the customer's contract and return their money.

Scenario 2 - Escalate a support ticket regarding a product oversight

My next conversation was much different. Here a long-time customer had submitted a complaint with our Support team about a small feature that was removed in a recent product release. It was clear to me that we screwed up.

After hearing their scenario, I agreed and admitted that we had inadvertently taken away a small piece of functionality that they had been relying on. I recommended that we escalate the Support ticket to trigger a feature enhancement request that would bring back comparable functionality to help restore their solution.

Scenario 3 - Meet face-to-face with an overwrought Administrator

And finally, toward the end of the week, I was made aware of another new customer who had been floundering a bit. The designated "administrator" at this company, who was in charge of making it all work, was currently enrolled in our (highly recommended) training class to learn the ins and outs of our software but was laboring under the breadth and depth of the course material.

Flickr image source: http://tinyurl.com/qyuf6pa

In truth, the original implementation project was poorly scoped and was running over budget. It was clear that more time would be needed to complete the work. But the Administrator was becoming even more unsure about his ability to ultimately support his own company's users when the solution was finally deployed.

I scheduled an hour-long meeting with him after the training class (we actually talked for more than 90 minutes) and listened to his concerns. After some healthy venting, he ultimately agreed that none of his immediate doubts were tied to the platform itself. Instead, we decided to revisit the original statement of work and work with Sales and Professional Services to properly expand the scope of the project to fit his organization's needs.

The impact

Looking back, I am not sure if my products are better off as a result of these interactions. At the end of the week, I had been engaged in three unique customer scenarios and delivered three different resolutions:

- Defend the product, even if it meant losing revenue and risking our reputation

- Admit our product mistakes and try to earn back the customer's trust

- Reset a (new) customer's product expectations and help them find a successful path forward

Look for more reports from theProductPath around customer research, product validation, and voice of the customer here on PM Decisions.

Build a quick proof of concept

After realizing that I had failed in my previous attempts to head off a questionable new product idea being advocated by our head of Sales, I decided to fabricate a bare bones working example to advance the conversation.

The Product Decision: Outsource a simple prototype to an overseas development partner to avoid disrupting the in-house Engineering team.

Flickr image source: http://tinyurl.com/o8lbm83

To be honest, I was more than a little intrigued by the new product being proposed, not because I thought it would prevent us from losing deals to our competitors - to my knowledge, we hadn't lost any yet, at least not for this supposed product gap. And it wasn't because the technology was particularly fascinating or exclusive - in fact, many of our customers could likely build a lightweight version of this on their own without much trouble.

I'd have to say my curiosity was driven by the opportunity to create something new.

Our company has been building a large software platform for over a decade, and it becomes harder and harder to innovate when you're towing along such a hefty code base.

But I wasn't going to let my own fascinations cloud my judgment. One of the classic mistakes companies make is to blindly pursue building a new product just because it is feasible for them to do so. I know better than that and I didn't want to carelessly lead my team down the wrong product path.

The next step then, was to find a way to better validate the claims coming from our own Sales team but to do so with the minimum amount of effort and investment.

What drove this decision

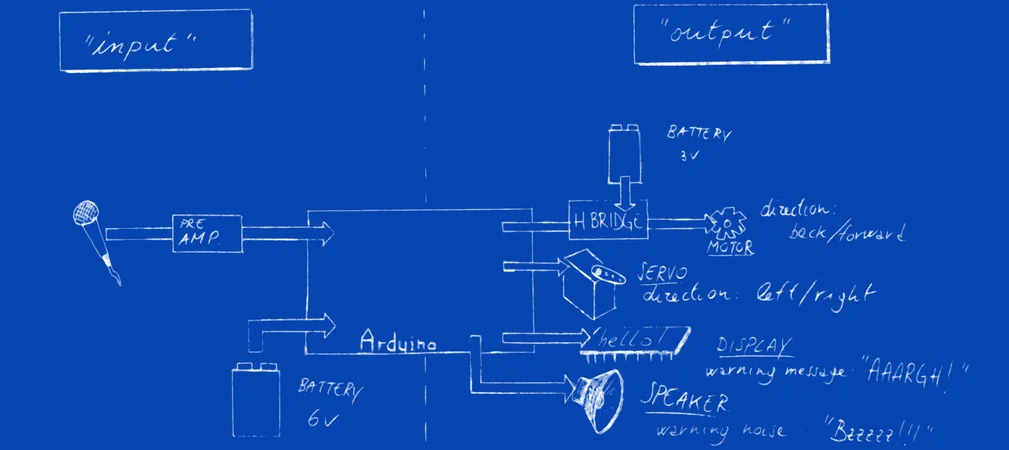

This is a simple chart I created to help drive more meaningful conversations with our Sales team. I have yet to get them to pin down all of these numbers for a given "missing feature" but in their defense, we don't really collect and track sufficient/suitable data in our sales process to drill down this far. Still, this model does help to frame product investment decisions.

Our Head of Sales was steadfast, still convinced that our product had to have this missing functionality, mostly because our chief competitor was flaunting their own version in front of our prospects. I had tried - and failed - to squash the idea before it gathered any more steam. It was becoming clear to me that I would have to dedicate more cycles to this product proposal, even if the end result was a decision not to move forward.

My chief problem, however, was that our Engineering team was booked solid for the next few sprints and I didn't want to distract them with a side project that was still a long way off from being productized.

I needed a way to make some incremental progress with the new product idea without impacting the team's current development velocity.

The decision: Outsource a simple prototype to an overseas development partner to avoid disrupting the in-house Engineering team.

Giving a new project to an external team can be tricky. My goals were to properly scope the work up front, produce the desired results quickly, and have a clear path forward when the project was complete.

Plan of attack

I was focused on reducing the size of the project to the point where I had what I needed to start validating the product with our end users and prospects. That meant more than just providing good requirements however. I also needed to give the remote team the support it would need to complete the job and deliver the goods.

Define basic requirements and allowable shortcuts

One nice thing about sharing early prototypes such as wireframes or mockups is that your intended audience will often be very forgiving. I have found that when provided with sufficient clarification, users will focus on what's there and not obsess about what's not yet there.

I wanted to make sure that the proof of concept had enough relevant functionality in place to confirm our initial hypotheses. I also wanted to identify places where we could safely skip over steps that would have to be part of the final solution. These include the compulsory but more technically challenging operations like installation and authentication.

I wrote and delivered the requirements to the remote team, being careful to emphasize the elements that would have to perform accurately for the end user. I then highlighted the remaining elements that didn't contribute directly to the end user experience, where I felt we were safe in taking time-saving shortcuts (e.g., "just hardcode it for now").

Provide technical support to troubleshoot the remote work

Our remote development team had been working with the company for several years but some new faces were joining this project. To make sure they could ramp up quickly and avoid any first-time stumbling blocks, I recruited a few key internal, technical resources to help launch and support the effort.

As it turns out, the remote team did hit some stumbling blocks in their attempts to use our new API and in resolving those issues, we actually uncovered - and fixed - some problems with our own API deployment process!

Secure the POC artifacts for the next round

When the proof of concept was finished, I had the remote team demo it to a few folks from our Engineering and Product teams. We were all pleased to see the results and I felt confident that we now had a suitable working model to take back to our Sales team and begin engaging customers.

The impact

I had already expressed my doubts to the Sales team about the actual effects of adding a component like this to our product mix - specifically, that it would not significantly advance competitive deals that are in jeopardy. With a working proof of concept in our hands now, it would be easier for us to determine exactly what (would-be) customers were looking for and whether this would affect their buying decision.

I would still have much more work to do in building a truly shippable product if I was wrong and customers latched onto this proof of concept. But I am convinced that I made a good tactical decision at this point and that we would realize a good return on this particular product investment decision.

Look for more reports from theProductPath around product validation, feature prioritization, and managing stakeholders here on PM Decisions.

More articles from our blog PM Decisions

Cut down the scope of the initial product by 20%

After receiving push back from users in the first rounds of testing, this startup decided to eliminate a significant portion of its product offering.

The Product Decision: Drop an entire section of the user workflow.

Flickr image source: http://tinyurl.com/pg4zl82

For the past year, I have been observing the early stages of a new business started here in Chicago. The technical co-founder, a close friend, has also been serving as the Head of Product, which is not uncommon for small companies. I have been keenly interested to watch how this team would bring their vision to market and all the twists and turns that path would take.

One interesting development occurred after they put their initial version of the product in front of their target users. The feedback was immediate and, in my opinion, quite sobering. The team seemed to have gone too far in one direction and was now facing a critical decision to scale back the initial scope of its product.

What drove this decision

“This is too hard. I don’t want to create more work for myself or my client.”

The originally envisioned product was intended to utilize a 3-step process. Users would complete a high-level questionnaire followed by a more detailed assessment to produce a curated product catalog where they could then make purchases in a familiar e-commerce scenario.

After developing a reasonable first pass at each of these components, the team put the product in front of a sample set of target users. The feedback was prompt and to the point: this is too hard.

The team had to make a change.

The decision: Drop an entire section of the user workflow

The (initial) users had spoken. The workflow had to change. It had to be simpler to complete. This startup team needed to step back and review the customer flow.

Plan of attack

The team ultimately decided to refactor the 3-step process and eliminate one of the questionnaires. After that, it would be time to test again:

- How would the new process change the results?

- Was there still a clear path to the purchase?

- What would the users find challenging about the new workflow?

Rethink the customer flow

The refactoring exercise began by decomposing the workflow process into finer grained steps, then exploring alternative paths for leading the user to the product registry at the end. The team focused on keeping what was needed most and trimming away the rest.

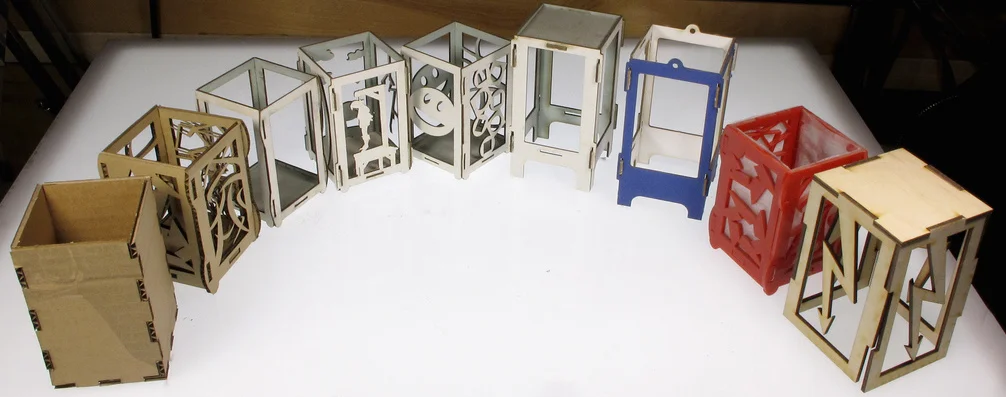

Redeploy the process with one fewer component

Having established the revised flow, the team proceeded to put the pieces back together to create a more streamlined activity for the users. The objective: a simpler process with fewer steps that produced the desired outcome.

Image source: https://www.flickr.com/photos/mmetcalfe/3874486791

Retest with users

Armed with an updated and improved product, the team scheduled the next round of user testing to validate their work.

The impact

Ultimately the team verified that their users could successfully complete the process without the extra steps. It takes courage to put an unfinished product in front of your customers and even more guts to go back to the drawing board when you get frank criticism.

Removing working code from a brand new product can be hard, especially if you look at the work required to build that code as having been wasteful. But I would argue that learning early leads to less waste. I applaud the startup team and encourage all product-minded people to follow their lead.

Look for more reports from theProductPath around product validation, feature prioritization and voice of the customer here on PM Decisions.

Evaluate a product integration with a potential partner

After some considerable prodding from the Sales team to help advance the largest deal in the pipeline, I decided to formally assess the key vendor integration options that could help us win the business.

The Product Decision: Create a graphical model highlighting the integration points for the customer's use cases to help validate a new partnership.

Image source: https://www.flickr.com/photos/emanuelec/5869072769

It is rare that any single product can wholly satisfy a customer's needs and quite common that multiple products will be used in concert to get the job done. Cloud-based products, like the one my company offers, are often combined to accomplish a set of use cases. Think of opening your Dropbox files in Microsoft Office 365 to make changes. Or pulling Flickr photos into a Slack channel to help the team troubleshoot a problem.

Through an investment in both SOAP and REST APIs, we have made our cloud product open to integration as well. We often point partners (and customers!) to these full-featured web services when we encounter requests for functionality that hasn’t been productized. But since it may be necessary to initiate the integration from our end, we also provide hooks in the platform for outbound calls.

I’ve always been intrigued with software integrations. Part of that interest is derived from exploring the forethought that goes into making any software product extensible and the rest is observing how the combination of two or more products results in a new creation that may never have been seen before.

What drove this decision

Sales was getting anxious - more anxious than usual

We had been salivating over a large opportunity in the sales funnel, a big deal in the hopper - a hit-your-quota kind of transaction. For weeks, the Sales team had been working hard to address the prospect’s long list of requirements and to zero in on the areas where there was lingering concern.

This particular prospect was asking for more functionality than we could - or likely ever would - provide. The Sales team was eager to determine whether another vendor could be introduced into the deal (always a risky proposition) to fill the functional gaps.

It was now my job to confirm whether or not this was technically feasible and if so, how it would be done. But in order to have all the parties understand the proposed solution which would likely be unfamiliar and quite technical, I was going to need to explain it with some pretty pictures.

The decision: Create a graphical model highlighting the integration points for the customer's use cases to help validate a new partnership

Joining the conversation late, I needed to orient myself to better understand the other vendor's product. I set up a few phone calls with both the vendor and the prospect, had some internal discussions with the Sales team, and scribbled a lot of notes. When I thought I had a good, initial model, something worth sharing, I polled each of the groups again, passing around a 1-page diagram where I had squeezed some boxes and lines together to create a crude chart.

These early rounds of validation prompted some tweaks to the diagram and I was able to tighten down the overall integration story. What also became clear was that, to make this happen, some non-trivial product investment would be involved on both sides. I knew we would soon be having a number of internal and external “business case” or ROI-type discussions and that the crude chart would not suffice. I decided to step it up and invest in a more robust model.

Plan of attack

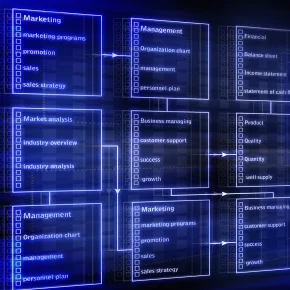

My goal with this improved model was to capture and communicate the concepts that would best help with the downstream decision making. This meant not just determining the number of integration points to fulfill the customer's use cases but the relative complexity and level of effort of each, as well. Clarifying to all parties which use cases required which integration scenarios meant that we could better prioritize the relative work involved.

Highlight the origin of each integration point

As I reviewed the use cases, I looked for places where the functionality of one of the products ended and where it needed to be picked up in the other product. This made it easy to determine which of the products would initiate a new integration scenario. On the diagram, then, I drew uni-directional arrows between the systems, which are themselves usually represented as fancy boxes.

I usually label the arrows with numbers or text to help direct the reader to the start of the flow. I also find it valuable to identify the “trigger” itself. For example, “a user clicks the upload button to start the process” or “the process is initiated when a user is added to the address book” or “each new PDF document added to the folder will initiate a call to the other system.”

When more than one back-and-forth call is required to accomplish a particular scenario, the diagram gets more interesting. Does the calling application wait for an immediate response (synchronously) or will the reply come back some time later (asynchronously)? What happens when the response never comes back - which party is responsible for resolving the loose ends?
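To make the "response never comes back" question concrete, here is a minimal Python sketch of a caller that waits for a reply but gives up after a deadline. It is only an illustration under stated assumptions: `call_partner` is a hypothetical stand-in for the other vendor's system (simulated with a delay), and the timeout values are arbitrary. It does not describe either product's actual API.

```python
import asyncio


async def call_partner(delay_seconds):
    """Hypothetical stand-in for a call to the other vendor's system."""
    await asyncio.sleep(delay_seconds)  # simulate network and processing time
    return "ok"


async def call_with_timeout(delay_seconds, timeout_seconds):
    """Wait for the reply, but stop waiting once the deadline passes."""
    try:
        return await asyncio.wait_for(
            call_partner(delay_seconds), timeout=timeout_seconds
        )
    except asyncio.TimeoutError:
        # The caller now owns the loose end. In a real integration this is
        # where the agreed retry, alert, or cleanup step would go.
        return "timed out"


def run_demo():
    """Run one call that answers in time and one that does not."""
    fast = asyncio.run(call_with_timeout(0.01, 0.5))
    slow = asyncio.run(call_with_timeout(0.5, 0.01))
    return fast, slow


if __name__ == "__main__":
    print(run_demo())
```

Whichever side sets the deadline also needs an agreed answer for what happens next, which is exactly the ownership question the diagram should surface.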

Identify dependencies

In identifying the origins of each integration point, I’ve invariably highlighted new dependencies between the products. Dependencies must be evaluated carefully because changes to the callee’s interface will affect all callers. It is common for Product Teams to update their interfaces (we do it with our own web service interfaces), and they must consider backward compatibility to minimize the impact on existing integrations.

For the sake of simplicity and because these would start to clutter up the sample diagram, I’ll skip over the security considerations, the deployment models (e.g. desktop, on premise, private/public cloud, etc.) and any differences in how each company licenses their products.

Identify alternatives for each integration point

As the saying goes, there is more than one way to skin a cat. This can apply to product integrations as well. I always look to indicate any possible alternatives that I discover so that the decision makers can better evaluate the full range of possibilities - even if the alternatives seem less practical at first.

For each alternative, I try to include the relative levels of effort, costs, etc. I usually have a good reason for choosing the primary option so I spell out that rationale for my first choice. Included in the rationale could be viability concerns about the integration technology choices, the types of connection(s) required, overall speed/performance, error handling, etc.

Distinguish between what's available now and in the future

In this particular effort, I learned that some of the integrations would be dependent on features that the other vendor had yet to release. To highlight that important variable, I made special, prominent notes about what would be feasible today vs what was yet to be built to support the integration.
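The attributes discussed in this plan of attack (origin, trigger, synchronous or not, relative effort, and what is available today) can also be kept as a small data structure alongside the diagram. The Python sketch below is one possible shape, not the model I actually delivered: the system names, the 1-to-5 effort scale, and the sample rows are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Availability(Enum):
    AVAILABLE_NOW = "available now"
    NOT_YET_RELEASED = "not yet released"


@dataclass(frozen=True)
class IntegrationPoint:
    label: str             # number or text shown on the arrow
    initiator: str         # system where the call originates
    target: str            # system that receives the call
    trigger: str           # event that starts the flow
    synchronous: bool      # True if the caller waits for the reply
    relative_effort: int   # hypothetical scale: 1 (low) to 5 (high)
    availability: Availability


def feasible_today(points):
    """Return only the integration points that need no unreleased features."""
    return [p for p in points if p.availability is Availability.AVAILABLE_NOW]


def total_effort(points):
    """Sum the relative effort across a set of integration points."""
    return sum(p.relative_effort for p in points)


# Hypothetical sample rows, for illustration only.
POINTS = [
    IntegrationPoint(
        label="1",
        initiator="Our platform",
        target="Partner product",
        trigger="a user clicks the upload button",
        synchronous=True,
        relative_effort=2,
        availability=Availability.AVAILABLE_NOW,
    ),
    IntegrationPoint(
        label="2",
        initiator="Partner product",
        target="Our platform",
        trigger="a new PDF document is added to the folder",
        synchronous=False,
        relative_effort=4,
        availability=Availability.NOT_YET_RELEASED,
    ),
]

if __name__ == "__main__":
    print([p.label for p in feasible_today(POINTS)])
    print(total_effort(POINTS))
```

Filtering on availability gives a quick view of what could be demonstrated to the prospect today versus what depends on either vendor's roadmap.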

The impact

I delivered the updated, more formal integration model to the teams and continued to participate in the partner discussion for a few more weeks. After both vendors had settled on a viable approach, we jointly presented to the prospect. The additional work to provide an integrated solution was spelled out along with the additional cost, some of which would be subsidized by the vendors. The final proposal, that included a modified version of my diagram, was well-received.

I often create and share models like this to weigh important decisions. I find it easier to grasp the complexity of a proposed solution when you are able to visualize the number of boxes and lines. Unfortunately, a diagram can't always communicate the longer term return on investment of a product integration. It doesn't tell you how many customers will use it or when you can expect sufficient revenue to cover the implementation costs. But that's another article....

Look for more reports from theProductPath around product validation, charts, and product strategy here on PM Decisions.

Getting ready to groom

In recognizing that too many of our user stories were not truly ready to be implemented, I decided to revisit the entire path to grooming.

The Product Decision: Formalize the team's story preparation process leading up to sprint planning.

Flickr image source: http://tinyurl.com/ogerhjp

For months, I had been watching the Product team wrestle with getting their particular features out the door and in front of our customers. And it wasn't just one PM who was struggling or an exceptional delay in delivery - it was borderline chronic. More often than not, I could trace the issues back to poor preparation by the Product team.

I have been working with our entire tech team to continuously improve our own Agile development process and we certainly have more work to be done. But the problems I needed to address were further upstream, before the stories entered the Engineering and QA queues. The Product team was creating unnecessary thrashing by failing to do our part well.

What drove this decision

When your product releases are scheduled less frequently (think months apart vs. weeks), there is a greater impact when you miss a delivery date. I was frustrated at having to drop features from a release because they weren't ready or seeing enhancements slip to the next sprint.

And while it is tempting to spread the blame across the myriad variables that go along with shipping product software, I became convinced that my own Product team had to step up to support our share of the contract.

Some product planning failures are easy to measure. Are features not being completed? Are your stories slipping over successive sprints and even releases? Are your Engineers and QA teams stalled waiting for last-minute clarifications around designs and requirements?

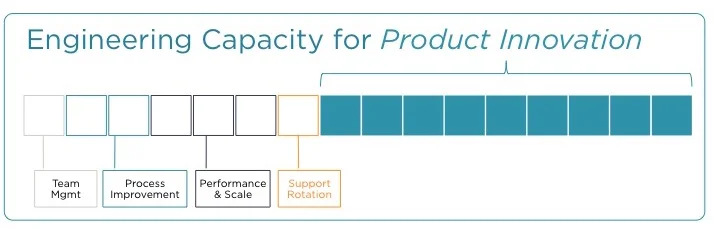

The decision: Formalize the team's story preparation process leading up to sprint planning

The bad/good news was that we had accumulated sufficient instances over the past few months to see a pattern emerging. Early on, we tried using note cards and a whiteboard to better track the larger product initiatives over sprints and releases. This seemed promising at first, probably because the team appreciated an exercise that was both tactile and collaborative. But we ultimately failed to capture any incremental advancement of any initiative using the cards. Moving the cards across the board from sprint to sprint didn't make us better at preparation and, worse, this exercise did not help us identify deficiencies in the planning process.

We needed a different approach, one that helped us focus on maturing product requirements BEFORE we gave them to the team. We needed a better product grooming process that preceded the actual backlog grooming sessions.

Plan of attack

The tactics described below were prescribed only for our larger product initiatives and would likely be overkill for bugs and smaller feature enhancements. We began by focusing on the problems that occurred most frequently, then folded in additional steps to mature the pre-grooming process.

STEP 1 - Include the fully baked UX designs

Perhaps the most obvious problem for our team was that we were often scrambling to get our front-end designs in place by the time the Engineers were ready to estimate the level of effort. We had become less and less stringent about completing the UX work and ensuring that formal designs were attached to a story or epic prior to backlog grooming or worse, sprint planning.

The lowest hanging fruit, then, was to re-establish this specification for all our user stories. Going forward, no stories would be allowed into a backlog grooming session unless the designs were complete. Obviously, there is no real definition of "fully baked" as teams will inevitably tweak designs during development and even testing but this was a relatively easy first stake in the ground.

STEP 2 - Review the acceptance criteria with internal stakeholders

I'm of the mindset that you can never stop improving on your ability to define product requirements. I encourage my Product Managers to continue to work on this skill with each new assignment. But there is an easy way to tell whether you are actually improving or not: gauge how often your stories are being kicked back by the Engineering or QA teams.

Flickr image source: http://tinyurl.com/le8jhxv

And even if you are scoring well with these groups who are much closer to the code, you probably have other stakeholders inside the company that can help you measure your aptitude. In our organization, the Sales Engineering and Professional Services teams are great early sounding boards. Another important group (for us and most organizations) is the Customer Support team. Each of these groups is intimately familiar with your customer base and can help validate how well you have done in capturing the happy path(s) and the larger set of user variations.

In our updated process, we have a specific gate for reviewing stories or at least the acceptance criteria with our internal stakeholders. This has the additional benefit of not catching them off guard during a Release Preview for example, when the feature is already "complete".

STEP 3 - Weave in usefulness testing with end users

When it comes to testing products with end users, excuses usually outnumber opportunities. Like most Product teams, we would prefer to have more time to spend with our customers but scheduling these interactions always seems more difficult than it should be. Still, the team and I firmly believe this is a critical part of the grooming process - especially as the requirements and designs come together.

Going forward, we have made this an explicit stage in the lead up to backlog grooming. Of the steps listed here, it is the most challenging to implement but will likely have the biggest payoff. I can think of nothing better for removing doubt and minimizing surprise than having end users validate your product throughout your development process.
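The three gates above can be sketched as a simple readiness check that runs before a story is admitted to backlog grooming. This is a minimal Python illustration, not a description of our actual tooling: the `Story` fields and function names are hypothetical, and in practice the check would apply only to the larger product initiatives.

```python
from dataclasses import dataclass


@dataclass
class Story:
    title: str
    designs_attached: bool = False                   # Step 1
    criteria_reviewed_by_stakeholders: bool = False  # Step 2
    tested_with_end_users: bool = False              # Step 3


def missing_gates(story):
    """List the preparation gates this story has not yet passed."""
    gates = [
        ("UX designs attached", story.designs_attached),
        ("acceptance criteria reviewed with internal stakeholders",
         story.criteria_reviewed_by_stakeholders),
        ("usefulness tested with end users", story.tested_with_end_users),
    ]
    return [name for name, passed in gates if not passed]


def ready_for_grooming(story):
    """A story enters backlog grooming only when no gate is missing."""
    return not missing_gates(story)


if __name__ == "__main__":
    draft = Story("Overhaul sharing dialog", designs_attached=True)
    print(ready_for_grooming(draft))
    print(missing_gates(draft))
```

Returning the list of missing gates, rather than a bare yes or no, tells the Product Manager exactly what preparation is still outstanding.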

The impact

The Product team is still adjusting to the new approach although we all recognize the improvements that will come with better story preparation. We've already seen some of those benefits: in the past few weeks, we withheld a number of stories from backlog grooming that had not met the new criteria, saving us hours of guaranteed thrashing with the Engineering and QA teams.

Additional refinements will be necessary. As we advance and extend our customer research and user testing, we will introduce new gates in an effort to further solidify the product features and push them through our Agile process.

Look for more reports from theProductPath around product validation, backlog grooming, and product culture here on PM Decisions.

Pull a large MVP-less feature from the next release

In recognizing that an important but poorly scoped product initiative was slipping further behind, I decided to push it farther back on the roadmap.

The Product Decision: Drop the feature from the next major product release and reinvest in some proper scoping.

Flickr image source: http://tinyurl.com/pl7xl4w

I would argue that our organization is actually quite Agile-minded but we have more work to do before we can start scheduling product releases in units of weeks rather than months. In our current state, we have to treat releases with a bit more care as they don't come around that often. So when we target a major product initiative to be completed and deployed in a future release, it is critical that we drive toward the date. Any slip could delay the feature by a month or more and that can have significant customer implications.

I had recently given one of the PMs on my team a real juicy assignment. We had determined, through some solid customer research, that one of the core features of the platform was in need of an overhaul. In matching up usage data with anecdotal user feedback, we recognized that the problems would require more than a few simple enhancements. This was indeed a large product initiative and was lining up to be one of our strategic product achievements for this year.

What drove this decision

We had been wrestling with all the usual challenges of developing a new product feature on a tight schedule. Some requirements required further clarification. Web page and email designs required rounds of tweaking and tuning. Plus the Engineers were being pulled away periodically to troubleshoot and fix other, non-related bugs. And then there was the Operations team who had planned a major infrastructure upgrade that created additional chaos, especially as we depended on those same resources to deploy the new feature.

I could go on, listing distractions and blaming other parties. But the number one reason for the slippage was simply subpar product planning. The PM had not done a good job in decomposing and staging the required work. After a few months, we had created some great new software but no shippable product.

The decision: Drop the feature from the next major product release and reinvest in some proper scoping

About a month before the next big release, it had become increasingly clear that we would not finish developing the feature in time to validate it thoroughly with our users. In our culture, we place a high value on both sticking to our release dates and only shipping product features that are user tested. The first constraint often governs the second.

The decision to drop the feature at this point in the release schedule may have been a bit precarious. But it was really only the first step in a series of related actions that kept the Product and Engineering teams busy for some time.

Plan of attack

As it turned out, our poor product planning had resulted in even more work than you might expect from what seems like a straightforward decision. We desperately needed to put this particular feature back on the right path but there were now some additional tasks that would enter the work stream.

REVERT TO THE OLD FEATURE

So confident had we been in our ability to complete this feature (including functional, performance, and user testing) that the team had started dismantling the legacy functionality.

Now we had to re-mantle it. Or more specifically, we had to back out some of the new enhancements in order to restore the old feature. No self-respecting Engineer enjoys this kind of work and indeed it cost the Product Team dearly in terms of credibility.

ADD A FEATURE TOGGLE

In order to preserve the progress that had been made, we invested some additional time in creating a true feature toggle that would allow us to enable the new version for individual customers. This provided us with the option to conditionally test the new functionality, as soon as it was available, with some of our more curious and perhaps more forgiving users.
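For readers unfamiliar with the idea, a per-customer feature toggle can be as small as the Python sketch below. It is an assumption-laden illustration rather than our implementation: the class, the customer IDs, and the `render_feature` helper are hypothetical, and a production toggle would normally read its state from configuration or a database instead of memory.

```python
class FeatureToggle:
    """Tracks which customers have the new version of a feature enabled."""

    def __init__(self, name, enabled_customers=None):
        self.name = name
        self._enabled = set(enabled_customers or [])

    def enable_for(self, customer_id):
        self._enabled.add(customer_id)

    def disable_for(self, customer_id):
        # discard() does nothing if the customer was never enabled
        self._enabled.discard(customer_id)

    def is_enabled(self, customer_id):
        return customer_id in self._enabled


def render_feature(toggle, customer_id):
    """Route a customer to the new or the legacy version of the feature."""
    if toggle.is_enabled(customer_id):
        return "new version"
    return "legacy version"


if __name__ == "__main__":
    toggle = FeatureToggle("overhauled-feature", enabled_customers=["acme"])
    print(render_feature(toggle, "acme"))
    print(render_feature(toggle, "globex"))
```

Defaulting every customer to the legacy version means the restored old feature stays the safe path, and the new version reaches only those who have opted in.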

RE-SCOPE AND RE-PLAN THE FEATURE

The bulk of the work came from decomposing the originally planned feature into smaller stories and creating a revised, incremental delivery plan that would help us deliver a true MVP first and then, in subsequent iterations, a series of progressive improvements.

Flickr image source: http://tinyurl.com/on6nch5

I will not use this report to describe the detailed process of scoping a product feature. There are many good discussions around discovering the Minimum Viable Product or MVP. To be sure, it takes practice to learn how to be ruthless. Good PMs find ways to whittle away at a product or feature until there are no nonessential elements remaining.

But it takes a different set of skills to then carefully plan the gradual introduction of those elements over time. It can be tempting to load up on enhancements, especially as product adoption increases among customers and users. Discipline and care are traits to be nurtured and celebrated in your Product Managers.

RESET EXPECTATIONS

As I mentioned earlier, this feature, when complete, is intended to make a big splash with customers. In making the decision to postpone its deployment, I now had the delicate task of resetting expectations with our Sales team, with Professional Services and Support teams, and with the few customers and prospects we had been engaging with for testing and research.

The impact

The existing customers and new prospects that were pining for this feature were disappointed with the decision to pull and postpone though I believe the blow was cushioned in two ways. First, we notified them of our product decision several weeks ahead of the release instead of only days before or even worse, after the release.

Second, we took care to explain that we did not feel comfortable pushing a significant new product feature without appropriate user testing. Not to say that this relieved all the anxiety but it is more difficult to argue with the choice of product quality over speed to market.

One final note. The Product team did consider moving ahead with a nearly complete version of the new feature and including it in the upcoming release. The thought was that we would have the ability to turn it on for a few, select customers in exchange for some heavy usability testing. I will publish more on that approach in a separate article.

Look for more reports from theProductPath around planning products and releases, MVPs, and product culture here on PM Decisions.

Create an in-person event to engage your customers

In realizing I had not spoken directly with our customers in some time, I decided it was time to get out of the building.

The Product Decision: Leave the building (and the city) and spend some quality face time with a group of customers who represented a good cross section of our users.

Image source: https://www.flickr.com/photos/highwaysagency/5997001123/

With a major software release right around the corner and being short a Product Manager due to a recent exit, the team was justifiably busy. There was plenty to do with making sure the upcoming deployment went smoothly while also firming up our plans for the very next release. And then there were the myriad other tasks that were on our plate, making it easy to rationalize that there simply was no time in the schedule to step away to talk to our customers.

But let's be honest here. If you don't make the time to engage your customers, all your other product plans are likely to fail.

What drove this decision

I was starting to get that gnaw in my stomach, the one that comes from making too many uninformed decisions. Because, no matter how confident you may be in setting the direction of your product(s), there is nothing more validating than getting direct feedback from your customer base.

Our Product team had been fairly diligent about reaching out to our users on a regular basis but many of those conversations are virtual and there is often a communication gap with phone calls and web-based meetings. I was eager to get some good face-to-face interaction with our target customers, to hear what they liked about our software, to learn what we could do to help them accomplish more, and to share where we were headed with our new roadmap.

Image source: https://www.flickr.com/photos/mdd/9890924226

The decision: Leave the building (and the city) and spend some quality face time with a group of customers representing a good cross section of our users.

As hesitant as I was about disappearing from the office for a few days, I knew the in-person discussions would more than compensate. And the plan for this particular trip was to spend a half-day with more than just 1 or 2 customers, so I knew I would return with a good assortment of responses to share with the team.

Plan of attack

Our Customer Success team is in the (good) habit of reaching out to new and existing customers in order to follow up with ongoing implementation initiatives and also to promote the Art of the Possible. We decided to make this event a twofer, extending their standard agenda with a short, product-specific program.

Build Simple Customer Profiles

Before "leaving the building", the first order of business was to gather some intel about the attendees with whom I would be meeting. Together with our Sales and Professional Services teams, I put together some brief customer profiles, paying particular attention to each customer's use case and, as a crude gauge of their comfort level with the product, how long they had been users of our system.

This gave me some good, initial context for the level of discussion I would likely be having and helped me refine the scope of the topics I could cover.

Create an Engaging Presentation

I know that very few people look forward to watching a PowerPoint presentation but, when done well, a good slide deck can be an ideal way to communicate ideas to a large audience.

For this event, I pulled together a series of slides that I thought would keep their attention and, more specifically, prompt some honest discussion among the group. For example, I included in the deck:

- A high-level vendor's perspective on their business problem, showing how the company views their particular customer scenarios, intended to trigger dialog around exceptions and edge cases;

- Annotated screenshots of recently introduced product features that they may not have had a chance to play with but that would take too much time to demo completely, meant to spark conversation around additional exploration and use of the product;

- And a 1-page Product Roadmap that communicated, in broad strokes and without specific dates, the priority of particular product initiatives related to their use cases, designed to stimulate feedback and confirm we were on the right track.

Days before the event, I reviewed the material with my team, incorporating some great, internal feedback while also rehearsing the presentation flow.

Demo, Survey, Preview, Recruit

There was a lot to squeeze into the time slot we had carved out for my Product talk. I wanted to promote the current state of the product and explain how and where we were looking to make enhancements. I had brought along some early, high-fidelity prototypes in case we hit on a particularly popular or sensitive topic. I was also hoping to conduct a brief survey to get feedback on a number of our product roadmap intentions. And finally, I hoped to recruit some of the customers into our more formal "labs" program so we could follow up with more intense analysis.

Other than a few weather-related, last-minute cancellations, the meeting went off without a hitch. The participants seemed pleased with having direct access to the Product organization and appreciated the brief glimpse into the company's product strategy. Many of them have since enrolled in the more structured labs program and have indicated a strong preference to stay engaged with us.

Share Results With the Product Team

I returned to the office the next week eager to review the notes with the Product team. And while it seems there is never sufficient time to fully digest results of customer interviews and surveys, I was able to share a synopsis of the event and fold much of the feedback into our (still crude) research database.

The impact

The good news is that the majority of the feedback validated our current product plans, at least for the next few major releases. It is certainly helpful to hear from your own customers that the product is indeed solving their needs. There were some familiar "hot spots" and valid complaints from the group and this has led to some re-prioritization work on our side that might not have been prompted by an interview with a single customer.

I strongly suspect there will be more such events in our future and that, as we continue to strengthen our outreach efforts, we will find it increasingly easy to get out of the building.

Look for more reports from theProductPath around customer research, product validation, and voice of the customer here on PM Decisions.

To address one of the most critical problem areas for customers using our software platform, I decided to roll up my sleeves and conduct the fieldwork myself.

The Product Decision: Enlist the help of our User Research expert to coordinate formal interviews with a suitable group of administrators and end users from our active customer base.